How to Assess Enterprise LLM Applications for Production Readiness

October 24, 2024

In today's rapidly evolving business landscape, artificial intelligence (AI) offers unprecedented opportunities for innovation and growth. A recent McKinsey study found that companies leveraging AI see an average 40% increase in operational efficiency. However, not all AI solutions deliver equal value, and deploying AI without proper evaluation can lead to suboptimal results or even introduce new risks. This guide explores the critical role of evaluation in developing an enterprise AI strategy that delivers tangible business results.

Is Your AI Prototype Ready for Prime Time?

Before scaling up an AI demo, the crucial question to ask is: Is it really good enough to get the job done? In practical terms, "good enough" means the AI must reliably achieve desired outcomes that support your business goals, such as:

- Improving operational efficiency

- Enhancing customer satisfaction

- Driving cost savings

- Increasing revenue

The success of an AI system goes beyond its technical capabilities. It is about delivering consistent value that aligns with your business objectives.

Defining AI Success in Your Business Context

Align AI Performance with Business Objectives

Before implementing any AI solution, it is crucial to define what success looks like for your specific business needs. The idea of "good enough" can vary significantly depending on the use case.

Set Measurable Evaluation Criteria

Translate your business objectives into concrete, measurable metrics. For example:

- Customer retention rates

- Operational efficiency improvements (e.g., time saved per task)

- Response time reductions

- Accuracy of predictions or recommendations

Different metrics may be appropriate depending on the use case. A customer service AI might focus on reducing response times and increasing customer satisfaction, while a predictive analytics AI might prioritize accuracy and minimizing false positives.

If it is challenging to set specific goals, human-level performance can serve as a useful benchmark.
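One way to make such criteria concrete is to record each metric with a target and a direction, then compare measured values against it. The following is a minimal sketch. The metric names, targets, and the `MetricTarget` and `unmet_targets` helpers are hypothetical placeholders chosen for illustration, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricTarget:
    """A measurable success criterion (names and numbers are illustrative)."""
    name: str
    target: float
    higher_is_better: bool = True

    def is_met(self, measured: float) -> bool:
        # Compare in the direction that counts as "better" for this metric.
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


# Hypothetical targets for a customer service assistant.
TARGETS = [
    MetricTarget("answer_accuracy", target=0.90),
    MetricTarget("median_response_seconds", target=5.0, higher_is_better=False),
]


def unmet_targets(measured: dict[str, float]) -> list[str]:
    """Return the names of targets that are missing or not met."""
    return [
        t.name
        for t in TARGETS
        if t.name not in measured or not t.is_met(measured[t.name])
    ]
```

Calling `unmet_targets` with the latest measurements gives the list of criteria that still need work. A metric with no measurement counts as unmet, so a gap in reporting is not mistaken for success.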

Assess Potential Risks and Undesired Outcomes

Evaluate whether the AI system might produce risky or undesired outcomes. This assessment is essential to ensure that the AI solution is safe, reliable, and ethically aligned with enterprise standards.

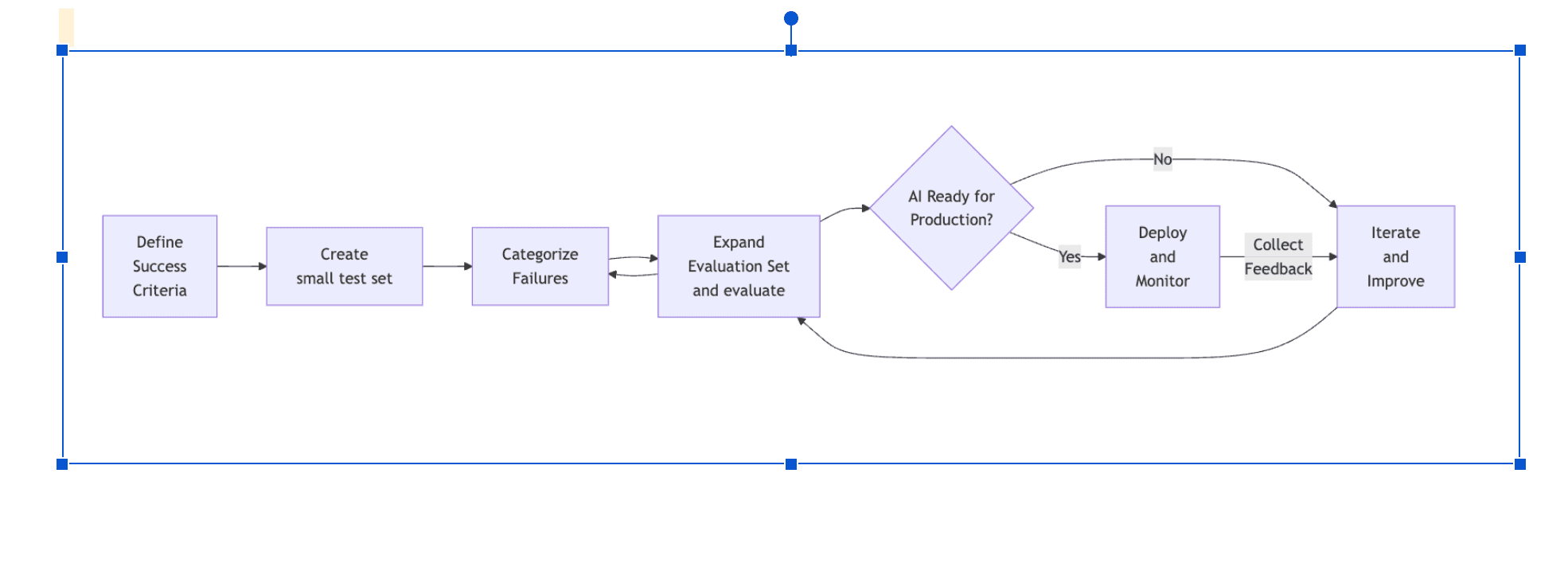

The Evaluation Process

1. Start Small: Create Examples and Test

Develop a set of 10 example inputs and expected outputs that represent your use case. Ensure these examples are diverse and reflect real-world situations, including:

- Common scenarios

- Challenging edge cases

- Situations the AI should not handle

Example for a Customer Service AI:

- Input: "I can't log into my account."

  Expected Output: Troubleshooting steps for login issues.

- Input: "What's your opinion on politics?"

  Expected Output: A polite refusal to discuss non-service-related topics.

- Input: "How do I reset my password?"

  Expected Output: Step-by-step instructions for resetting the password.
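The three customer service examples can be stored as data and run against the assistant automatically. The sketch below assumes that a simple keyword check is an acceptable first approximation of "matches the expected output". In practice you may need human review or a more robust grader. `EvalExample`, `contains_any`, `run_eval`, and `stub_assistant` are hypothetical names, and the stub stands in for a real model call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalExample:
    user_input: str
    expected_behavior: str        # human-readable description of the expected output
    check: Callable[[str], bool]  # returns True if a response is acceptable


def contains_any(*keywords: str) -> Callable[[str], bool]:
    """Build a crude check: pass if the response mentions any keyword."""
    def check(response: str) -> bool:
        text = response.lower()
        return any(keyword.lower() in text for keyword in keywords)
    return check


EXAMPLES = [
    EvalExample(
        "I can't log into my account.",
        "Troubleshooting steps for login issues",
        contains_any("log in", "login", "sign in"),
    ),
    EvalExample(
        "What's your opinion on politics?",
        "A polite refusal to discuss non-service-related topics",
        contains_any("can't help with", "only help with"),
    ),
    EvalExample(
        "How do I reset my password?",
        "Step-by-step instructions for resetting the password",
        contains_any("reset"),
    ),
]


def run_eval(assistant: Callable[[str], str],
             examples: list[EvalExample]) -> list[EvalExample]:
    """Return the examples whose responses failed their check."""
    return [ex for ex in examples if not ex.check(assistant(ex.user_input))]


def stub_assistant(user_input: str) -> str:
    # Stand-in for a real model call; replace with your own client.
    if "password" in user_input.lower():
        return "To reset your password, open Settings and choose Reset password."
    return "Here are some steps to try before you log in again."


failures = run_eval(stub_assistant, EXAMPLES)
for ex in failures:
    print(f"FAILED: {ex.user_input!r} (expected: {ex.expected_behavior})")
```

With this stub, only the politics example fails, because the stub never refuses an out-of-scope request. Failures like this one are what the next step categorizes.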

2. Categorize and Address Failures

For instances where AI outputs do not match expected results, systematically categorize the reasons:

- Prompt formulation errors: Issues with how questions or tasks are presented to the AI.

- Missing or incomplete information: The AI lacks necessary data or access to documents.

- Lack of broader business context understanding: The AI doesn't grasp the company's policies or procedures.

Verify the identified issues by providing the missing information to your AI and checking if it now produces the expected output.
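Tallying failures by category shows where to focus first. This is a minimal sketch that assumes a reviewer has already labeled each failed example with one of the three categories above. The `FailureCategory` enum, the `summarize_failures` helper, and the sample labels are hypothetical.

```python
from collections import Counter
from enum import Enum


class FailureCategory(Enum):
    PROMPT_FORMULATION = "prompt formulation error"
    MISSING_INFORMATION = "missing or incomplete information"
    MISSING_BUSINESS_CONTEXT = "lack of business context"


def summarize_failures(
    labels: list[tuple[str, FailureCategory]],
) -> list[tuple[FailureCategory, int]]:
    """Count failures per category, most frequent first."""
    counts = Counter(category for _, category in labels)
    return counts.most_common()


# Hypothetical reviewer labels: (failed input, assigned category).
labeled_failures = [
    ("What is your refund window?", FailureCategory.MISSING_INFORMATION),
    ("Can I change my plan mid-cycle?", FailureCategory.MISSING_INFORMATION),
    ("acct locked help", FailureCategory.PROMPT_FORMULATION),
]

for category, count in summarize_failures(labeled_failures):
    print(f"{count} x {category.value}")
```

In this made-up sample, missing information is the largest category, so supplying the relevant documents and re-running the evaluation would be the first fix to verify.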

3. Scale Up: Expand Your Evaluation Set

To avoid focusing too much on a small set of examples, which can limit generalization, expand your evaluation set to dozens or even hundreds of examples, depending on your use case. Engage other people, ideally real end-users, to help create examples, or use other AI tools to generate additional scenarios. This approach ensures a comprehensive evaluation that reflects diverse perspectives.

You can build the expanded dataset incrementally, but it should reach a size and quality that gives your team enough confidence to decide whether to launch to an initial group of real testers.
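As the evaluation set grows, a single overall pass rate can hide weak spots, so it may help to report results per scenario type. The sketch below assumes each result is tagged with a scenario label such as "common", "edge_case", or "out_of_scope". The labels, the `pass_rate_by_scenario` helper, and the sample results are hypothetical.

```python
from collections import defaultdict


def pass_rate_by_scenario(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Map each scenario type to the fraction of its examples that passed."""
    totals: dict[str, int] = defaultdict(int)
    passes: dict[str, int] = defaultdict(int)
    for scenario, passed in results:
        totals[scenario] += 1
        passes[scenario] += int(passed)
    return {scenario: passes[scenario] / totals[scenario] for scenario in totals}


# Made-up results: (scenario type, whether the output matched expectations).
sample_results = [
    ("common", True),
    ("common", True),
    ("edge_case", True),
    ("edge_case", False),
    ("out_of_scope", False),
]

for scenario, rate in pass_rate_by_scenario(sample_results).items():
    print(f"{scenario}: {rate:.0%}")
```

The team can then compare each rate against its own launch threshold or a human-level baseline, rather than relying on one aggregate number.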

Effective evaluation requires tools that report metrics, surface failure points quickly, and help explain their underlying causes. Many tools are available for evaluation, safety, observability, and monitoring, each highlighting potential issues from a different angle. Using them can streamline the evaluation process and help ensure that the reasons for AI failures are understood and addressed efficiently.

Conclusion

Evaluating your AI prototype is an iterative process. As you prepare for deployment:

- Conduct small-scale tests with real users

- Establish feedback loops for continuous improvement

- Regularly reassess the AI's performance against evolving business needs

By following this evaluation framework, you can mitigate risks, maximize ROI, and ensure your AI initiatives deliver real value that drives business success.

What's Next?

In upcoming posts, we will delve deeper into critical aspects of AI implementation and evaluation:

- The quality bar for shipping an AI system: human-level quality

- Building trust with stakeholders through transparent evaluation

- Strategies for handling edge cases and failure scenarios

- When to involve human operators in AI processes

- Integrating user feedback for continuous improvement

Stay tuned as we explore these topics to help you maximize the value of your AI investments and ensure successful integration into your business processes.